Stop letting AI be the CMO: use it for pattern recognition, not judgment

AI can analyze 60,000 reviews in 20 minutes. It can't tell you what they mean for your brand. That's still your job.

You spent hours on it with AI. You reviewed the fee structure from the client's POV, stress-tested every objection, and ran it through every scenario you could think of. You walked into that meeting prepared. Confident. Ready.

The client pushed back in a way you hadn't anticipated. A week later, you looked at the numbers again and realized you had set the highest bonus threshold below what they had already achieved the previous year. They had been right the entire time. You lost the contract. At their run rate, it was worth $150,000.

That's what happened to Kasey Luck, CEO of Luck & Co Agency. Not because she didn't prepare. Because she let AI make the judgment call that was hers to make.

Her Unspam 2026 talk was about that mistake, the framework she built after it, and two workflows that changed how her entire agency operates. Here's what she covered.

First, the expensive part

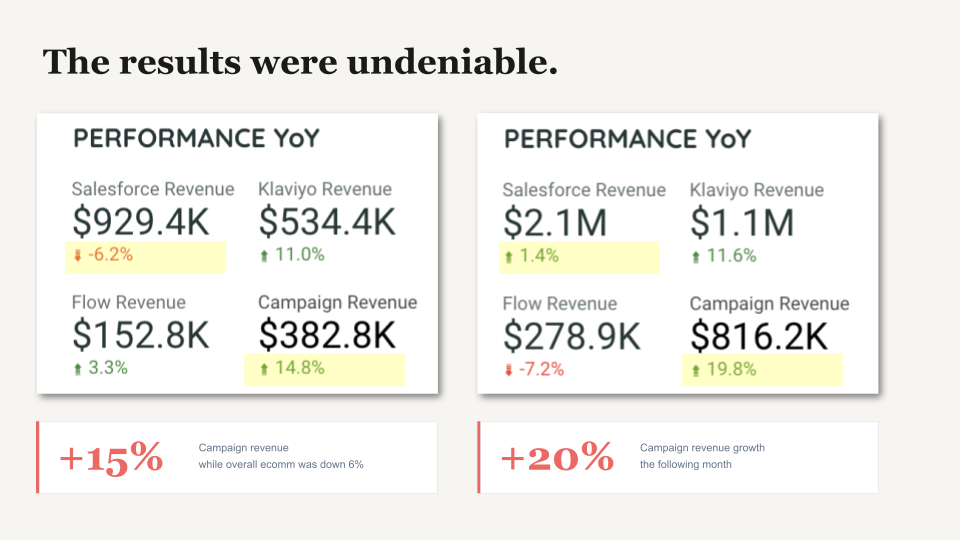

The results were undeniable.

Month one: campaign revenue up nearly 15%, while the client's overall website revenue was down 6%. Month two: campaign revenue up 20%, site revenue essentially flat. Under the performance-based bonus structure, Luck & Co received $0.

So they renegotiated. Kasey spent hours with AI on the new fee structure, reviewing it from the client's POV, stress-testing every objection. She walked in prepared. The client pushed back in a way she hadn't anticipated. She defended her structure.

A week later, she looked at the numbers. She had set the highest bonus threshold below what the client had already achieved the previous year. The client had been right all along. The AI had sounded right. Those are not the same thing.

What's in it for you: this is not a cautionary tale about AI being bad. It's a cautionary tale about job descriptions. Keep reading.

How most teams have it backward (including yours, probably)

After losing that client, Kasey audited every AI task running at her agency. She stopped asking "can AI do this?" (it can do almost anything) and started asking a different question: should AI be making this call?

What she found: her team had quietly promoted AI from intern to decision-maker. Nobody had noticed. Nobody had decided. It just happened.

She asked the room at Unspam for a show of hands. Almost everyone had used AI to write email copy in the last week. Fewer had used it for campaign strategy. Almost no one had used it to synthesize what actually worked over the last six months before planning the next season.

Then she shared what she hears when she talks to people in the industry:

- "AI is really bad at subject lines. It just repeats the same formulas over and over."

- "It's not useful for more refined strategic work. Even with good prompts, it still doesn't match what I can do as a human."

- "I'm still trying to figure out how to use it as a second brain for strategy." (That last one is both the aspiration and the problem, all in one sentence.)

One person said something completely different: "The strategy is always mine. So is the shitty first draft. I don't follow its lead. I make it follow mine."

Same tool. Completely different relationship. The difference is that this person had drawn a line that most people haven't.

What's in it for you: figuring out where that line is will save you from a very expensive meeting with a very patient client who is about to be right about everything.

The line (it's simpler than you think)

Pattern recognition vs. judgment

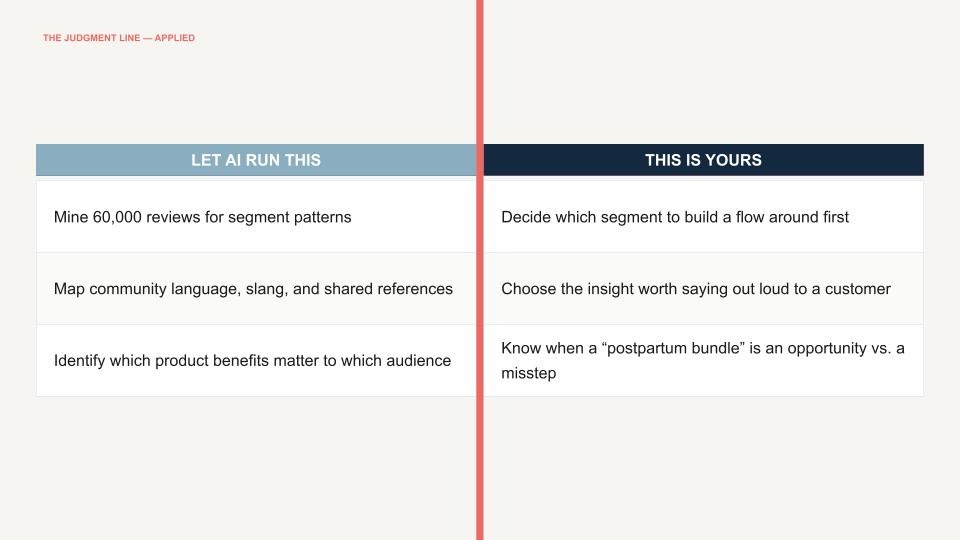

Every task in email marketing requires one of two things: pattern recognition or judgment.

Pattern recognition is the process of finding the signal in a sea of data. Judgment is deciding what to do with that signal.

AI is extraordinary at pattern recognition. It can analyze more customer reviews in 30 seconds than you can read in a month. Judgment, the ability to look at something technically correct and know it's still wrong, is where AI often fails. And when it fails, it sounds just as confident, just as smooth, and just as logical as when it's right. (That last part is the dangerous bit. A confused intern looks confused. AI just keeps going.)

The line Kasey drew is simple: AI finds the signal. You decide what it means.

Before you reach for AI, ask yourself: does this task require pattern recognition or judgment? If it's pattern recognition, AI can probably do it faster than you can. If it's judgment, that's your job, and you can't afford to outsource it.

What's in it for you: a one-question filter that stops you from spending three hours with AI on something that was going to need your brain anyway.

Workflow 1: 60,000 reviews, 20 minutes, one insight nobody had named

Here is something that happens at every new client kickoff: someone tells you who their customer is, you nod, take notes, and trust them. They've been doing this longer than you have.

What if you could verify it? Not from a call. Not from a survey someone ran 18 months ago. From tens of thousands of actual customers.

Kasey's agency recently started working with a skincare brand. During a 90-minute kickoff call, the brand team walked them through five customer segments in the scattered, chaotic way brand teams usually do. Mothers are a big segment. Deodorant is how people come in. Gifters are small but loyal. Clean living is emerging.

After the call, she downloaded a CSV from the brand's Yotpo app: 60,208 reviews across roughly 230 products, covering 18 months of data. She fed them to Claude and ran two prompts. The whole thing took 20 minutes. (Give or take 10 minutes of fighting with it, as she put it.)

The first output was a spreadsheet of 270 representative reviews organized by category: benefits, pre-purchase anxieties, competitive contrasts, plus sub-themes Claude identified on its own. Useful for anyone who needs to understand the customer quickly and find the right language for a campaign or a flow.

The second output was a Google Doc with five audience segments Claude had identified from the reviews. Roughly the same five the client had described on the call, but extracted without any context from that conversation and with descriptions that were, in her words, "deliciously rich."

When Claude slipped

She almost trusted it completely. Then she asked Claude to show its work. The table it returned showed that the first segment, described as "the highest volume segment by far," accounted for 0.4% of total reviews. Claude had quietly slipped judgment into what was supposed to be pure extraction. She caught it because she asked.
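Asking for the table is the step that caught the error, and you can repeat that check outside the model. The sketch below assumes, hypothetically, that the per-review segment labels from the tagging pass can be exported as a plain list of strings. The function names and the `segment_a`/`segment_b`/`segment_c` labels are illustrative, not part of Kasey's workflow. The code recounts each segment's share of reviews and checks whether the segment the model called the largest really is.

```python
from collections import Counter


def segment_shares(labels):
    """Return (segment, count, percent) tuples, largest segment first.

    `labels` is a hypothetical export: one segment name per review.
    """
    total = len(labels)
    if total == 0:
        return []
    counts = Counter(labels)
    # most_common() with no argument sorts every segment by count, descending
    return [(seg, n, 100 * n / total) for seg, n in counts.most_common()]


def check_claim(labels, claimed_top_segment):
    """True only if the claimed segment actually has the most reviews."""
    shares = segment_shares(labels)
    return bool(shares) and shares[0][0] == claimed_top_segment


if __name__ == "__main__":
    # Hypothetical data: 1,000 tagged reviews, not the brand's real numbers
    labels = ["segment_a"] * 4 + ["segment_b"] * 600 + ["segment_c"] * 396
    for seg, n, pct in segment_shares(labels):
        print(f"{seg}: {n} reviews ({pct:.1f}%)")
    # The model called segment_a "the highest volume segment by far"
    print(check_claim(labels, "segment_a"))  # False: it holds 0.4%
```

Counting the labels directly turns a claim like "highest volume segment by far" into a number that is either true or false. Deciding what that number means for the client is still the judgment call.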

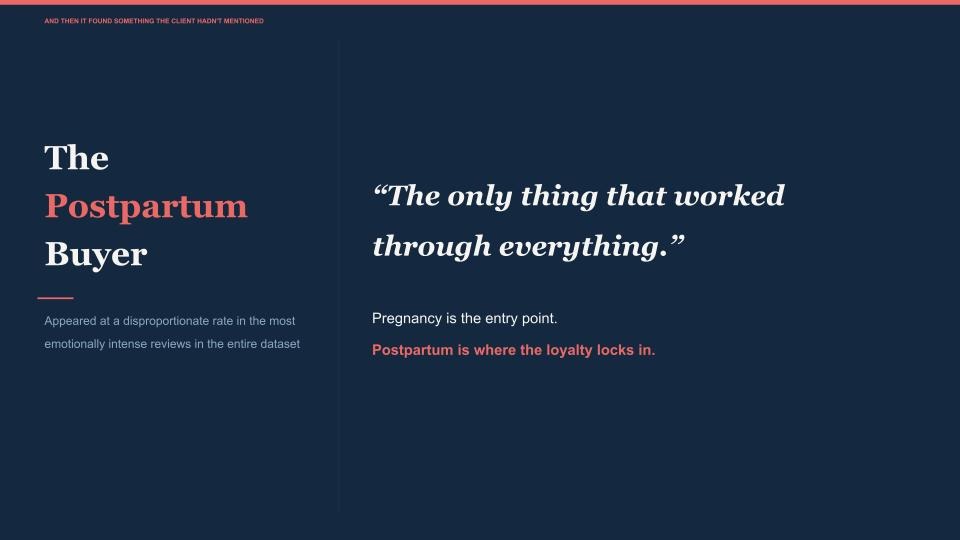

Two things came out of that analysis that actually mattered. First, when the client's VP of Marketing challenged the welcome flow strategy, every strategy card her team presented carried customer language pulled directly from real reviews. The VP listened. Second, one insight surfaced that the kickoff call had never flagged: the postpartum buyer appeared disproportionately often in the most emotionally intense reviews across the entire dataset. The client had said pregnancy was the entry point. The data showed that postpartum was when loyalty walked in.

That shifted the conversation from "here's the welcome flow we recommend" to "should we introduce a postpartum bundle?"

By Kasey's estimate, a research firm would have charged around $30,000 for this analysis and taken multiple weeks to complete it, working from a sample. The Claude workflow took 20 minutes and used the full dataset.

What's in it for you: you walk into the strategy call knowing something the client doesn't know about their own customers. That changes the conversation.

Workflow 2: fluent from day one (reef donkeys included)

The second workflow is about onboarding to a brand you know nothing about.

A strategist on Kasey's team got handed a fishing brand: specifically slow-pitch jigging, a niche technique with its own community, slang, culture, and gear debates. She had never fished a day in her life. She spent 30 minutes in a conversation with Claude. The result was a nine-page brief. It was not a summary of the brand's website but intelligence pulled from forums, YouTube comments, and Facebook groups. The community was 95% male, with an average age between 35 and 40. Think golf, but on a boat. The brief covered who they trust, what they don't forgive, and a seasonal calendar of which species run when, and which ones you can't legally tell people to keep.

It also included the slang. A reef donkey is an amberjack. A smoker is a big kingfish. A drop is when you let the jig sink. And "one last drop" is what these guys say to each other at the end of every trip before calling it a day.

One prompt addition made the brief more trustworthy: the strategist asked Claude to surface only insights backed by at least two sources. With that rule in place, Claude caught itself presenting a few things as community-wide norms when they weren't, and corrected them.
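The two-source rule was a prompt instruction, but you can also enforce the same filter on the finished brief if each insight is stored with the links it came from. What follows is a minimal sketch. It assumes a hypothetical `Insight` record holding a claim and a list of source URLs, and the example.com-style URLs are placeholders. As a stricter reading chosen here (an assumption, not the strategist's stated rule), it counts distinct websites rather than distinct links, so two posts on the same forum count as one source.

```python
from dataclasses import dataclass, field
from urllib.parse import urlparse


@dataclass
class Insight:
    """Hypothetical record: one claim from a brief plus the URLs behind it."""
    claim: str
    sources: list[str] = field(default_factory=list)


def distinct_sites(insight):
    """Count distinct hostnames, so two posts on one forum count once."""
    return len({urlparse(url).netloc.lower() for url in insight.sources})


def corroborated(insights, min_sources=2):
    """Keep only insights backed by at least `min_sources` distinct sites."""
    return [i for i in insights if distinct_sites(i) >= min_sources]


if __name__ == "__main__":
    # Placeholder URLs; a real brief would carry the actual forum/video links
    brief = [
        Insight("'Reef donkey' means amberjack",
                ["https://forum.example.com/t/101",
                 "https://video.example.org/watch?v=1"]),
        Insight("Hypothetical community-wide norm",
                ["https://forum.example.com/t/202",
                 "https://forum.example.com/t/203"]),
    ]
    for insight in corroborated(brief):
        print(insight.claim)  # only the amberjack claim survives
```

Whether a dropped claim is still worth testing with the client is a separate decision. The filter only flags what lacks corroboration.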

This document doesn't write the campaign calendar. It doesn't decide which angle to lead with. That's still the work. What changed is that the strategist who used to walk into monthly meetings asking the client to explain their own world now walks in fluent, with ready-to-go campaign concepts based on seasonality she already understands.

What's in it for you: your strategist stops being a student in client meetings and starts being a peer. From day one.

The only question that matters

Kasey closed where she started. She didn't use AI wrong. She used it in the wrong place. She promoted it to a job it was never supposed to have. If she had kept it in the intern role on the day of that contract negotiation, she would still have that client.

The tool isn't the problem. The assignment is.

AI finds the signals in 60,000 reviews. You decide what those signals mean for your brand, your client, and the season ahead. You got into email marketing because you understand people. AI doesn't understand people. It predicts them. That distinction can be your edge, if you don't give it away.

Two things to do when you get home: identify which pattern recognition tasks you've been doing manually and hand them to AI. Then identify which judgment calls you've been outsourcing to AI and take them back.
